How correct does a chatbot need to be?

Why people don't judge bots the same way as humans

Humans are fallible; it’s one of our best traits. Some wonderful inventions came about as the result of mistakes.

In customer service, mistakes are regrettable, but they have become a fact of life. The difference in First Time Right score between a brand new employee and an experienced representative can be as much as 20 percentage points. With high staff turnover in many contact centres, that can result in a lot of unhappy customers.

AI bots offer the promise of better and more consistent quality, but a bot is still dependent on the quality of the knowledgebase it is drawing from, and Generative AI bots run the risk of producing hallucinations if their inputs and outputs are not sufficiently controlled.

Both humans and bots can be fallible, for different reasons, but we are much less forgiving if a bot makes an error. This can result in:

High-profile negative publicity - like DPD’s highly self-critical bot

Costly legal claims - as experienced recently by Air Canada

Why do we hold AI to a higher standard than human beings and other self-service channels?

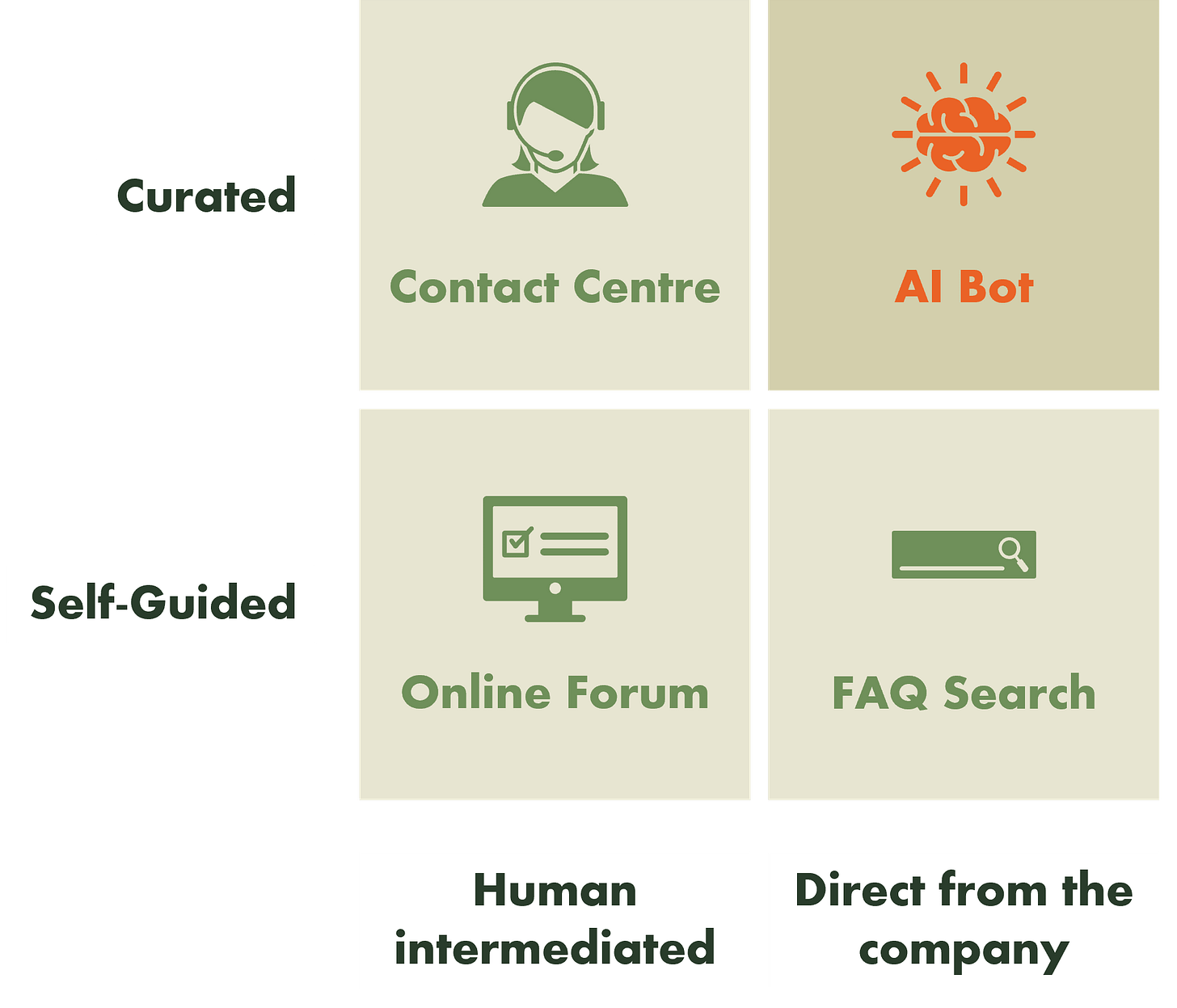

This diagram can help us to understand customer perceptions.

AI bots are unique because they provide information that is curated on behalf of the customer, and they provide this information directly without any human intermediation.

Curated vs. Self-Guided

When using self-guided service channels, like FAQs and online forums, customers are more diligent. They check multiple sources and make sure they have fully understood the issue before proceeding.

With curated channels, like a contact centre or an AI chatbot, customers trust that the diligence is being carried out on their behalf. They expect that the answer has already been cross-checked for accuracy.

Direct vs. Human-Intermediated

When information is presented directly from the company, without any human intermediation, customers perceive that the information is more formal and official. If they ask the same question twice, they will likely get the same answer, so they have a higher expectation of accuracy.

So how accurate should a bot be, and how can companies get this right?

My experience has been that a bot doesn’t need to be perfect, but it does need to be consistently accurate every time a quantifiable promise is made to a customer. For example (non-exhaustive):

A financial amount: price, credit card APR etc.

A unit of time: installation date, length of warranty etc.

Other quantifiable measures of quality: broadband speeds, range of an electric vehicle etc.

That last category is interesting, because here you might not need to be 100% precise, but it is important to be consistent, even if providing a range of values.

There are two main ways that companies can control this:

1. Control the inputs

The obvious starting point is to make sure that the knowledgebase is correct, but this may not be a trivial task when there are thousands of knowledge articles. Some companies have started to redeploy second-line customer service reps to take on the role of checking and rewriting knowledge content. You can also use Generative AI to analyse and highlight discrepancies in existing materials.

A more severe version of controlling inputs is to prevent customers from asking the bot questions about sensitive topics, and to re-route these questions to a human straight away.
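As a rough illustration of that re-routing idea, here is a minimal Python sketch. The keyword list and the `route` function are hypothetical, invented purely for this example; a real deployment would more likely use a trained topic classifier than simple keyword matching.

```python
# Hypothetical list of sensitive terms (an assumption for illustration only).
SENSITIVE_KEYWORDS = {"refund", "compensation", "apr", "complaint"}


def route(message: str) -> str:
    """Return 'human' if the message touches a sensitive topic, else 'bot'."""
    # Normalise each word: strip common punctuation and lowercase it.
    words = {w.strip(".,?!").lower() for w in message.split()}
    # Any overlap with the sensitive list sends the customer to a person.
    if words & SENSITIVE_KEYWORDS:
        return "human"
    return "bot"


if __name__ == "__main__":
    print(route("What is the APR on this card?"))
    print(route("What are your opening hours?"))
```

The trade-off is deliberate: the bot answers fewer questions, but the ones most likely to contain a quantifiable promise never reach it.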

2. Control the outputs

An emerging and more sophisticated approach is to monitor and control the outputs of an AI bot before they are presented to a customer. This can involve forcing the bot to use a predictable, deterministic algorithm in response to certain questions, and checking the outputs against the assured knowledgebase, with a risk-based scoring mechanism.
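To make the output check more concrete, here is a minimal Python sketch of one way it could work, not a description of any particular product. It pulls every number out of a draft reply, compares those numbers with the figures approved for that topic, and blocks the reply if an unapproved figure appears on a high-risk topic. The facts, the risk weights, the threshold and the names `ASSURED_FACTS`, `RISK_WEIGHTS` and `check_reply` are all assumptions made up for illustration.

```python
import re
from dataclasses import dataclass

# Hypothetical "assured" figures per topic (illustrative values only).
ASSURED_FACTS = {
    "warranty_years": {"2"},
    "card_apr_percent": {"24.9"},
}

# Hypothetical risk weights: how costly a wrong figure would be per topic.
RISK_WEIGHTS = {"warranty_years": 0.6, "card_apr_percent": 1.0}

# Matches whole numbers and decimals such as "2" or "24.9".
NUMBER = re.compile(r"\d+(?:\.\d+)?")


@dataclass
class Verdict:
    allowed: bool
    risk: float
    reason: str


def check_reply(topic: str, draft: str, threshold: float = 0.5) -> Verdict:
    """Decide whether a draft bot reply may be shown to the customer."""
    numbers = set(NUMBER.findall(draft))
    if not numbers:
        return Verdict(True, 0.0, "no quantifiable claim")
    # Figures that do not appear in the assured knowledgebase for this topic.
    unverified = numbers - ASSURED_FACTS.get(topic, set())
    if not unverified:
        return Verdict(True, 0.0, "all figures match the assured knowledgebase")
    # Unknown topics are treated as maximum risk.
    risk = RISK_WEIGHTS.get(topic, 1.0)
    return Verdict(risk < threshold, risk, f"unverified figures: {sorted(unverified)}")


if __name__ == "__main__":
    print(check_reply("card_apr_percent", "The APR is 24.9%."))
    print(check_reply("card_apr_percent", "The APR is 19.9%."))
```

A blocked reply could then be replaced with a deterministic, pre-approved answer or handed to a human, depending on the risk score.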

Whatever the approach, the most important thing is to ensure that bot development is highly transparent and coordinated between Customer Service, Customer Experience, Digital and Technology teams, so that a consistent experience can be assured.

Hopefully this is obvious, but I should flag that none of this is a legal opinion - I’m talking about customer perceptions and expectations.

Recommended news articles

If it feels like scams are thick on the ground - it’s because they are

How Generative AI can put a human touch back into customer service

Google ‘Talk to a Live Rep’ brings Pixel’s Hold for Me to all Search users

Latest perspectives from BCG

GenAI needs pricing strategies to match its potential

Imaginative use cases, rapid technological progress, and boardroom drama have dominated the headlines about generative artificial intelligence (GenAI) since the launch of ChatGPT in November 2022.

Largely absent from these conversations, however, is a discussion about how software companies and GenAI application developers should set the right pricing strategy and pricing models, two critical elements that determine the long-term trajectory of any new technology.

Spot on. I often see leaders concerned by negative news headlines about AI chatbots going rogue (profanity, insults or ‘lying’), and my hypothesis is that this is due to poor design/implementation, which shows the criticality of RAI.

Two questions that may benefit other readers:

(1) How much of this, in your opinion, is due to poor design / build?

(2) Besides the deterministic ‘vetting’ algorithms, what else can businesses do to prevent these issues?